A few years ago, most scams were easy to notice. The email looked strange, the message was full of spelling mistakes, or the caller sounded suspicious. In 2026, that is no longer reliably true, because scammers are using AI tools to write better messages, clone familiar voices, and create fake images, videos, and audio that feel real at first glance.

That is what makes AI scams and deepfakes so dangerous. They do not always rely on bad design or obvious red flags. Instead, they rely on speed, emotion, and trust. A scammer may pretend to be your bank, your boss, a delivery company, or even a family member in trouble. The scam works when you react before verifying the message.

The good news is that you do not need to become a cybersecurity expert to protect yourself. You just need to slow down, know the warning signs, and use a few verification habits before clicking, paying, or sharing personal information.

What AI scams look like now

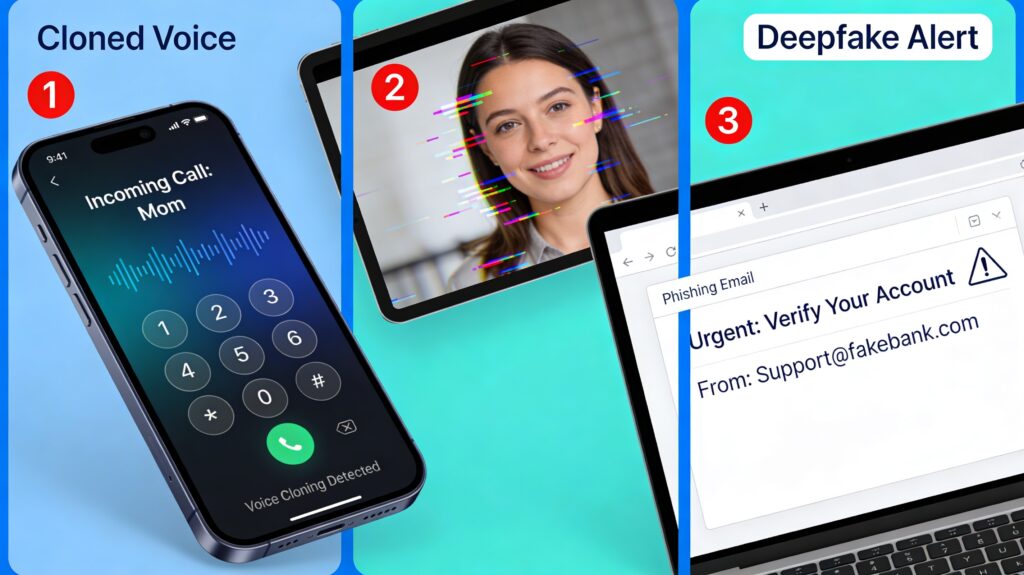

Modern AI scams usually fall into a few common categories. One of the biggest is voice cloning, where a scammer uses a short sample of someone’s voice to create a fake call that sounds real. The FTC has specifically warned that criminals may clone a family member’s voice, claim there is an emergency, and pressure you to send money immediately.

Another growing threat is deepfake video or audio impersonation. These scams can make it appear that a real person said something they never said. The FBI has also warned about AI-generated voice deepfakes being used in impersonation campaigns to gain trust and steal information.

Then there is AI-written phishing, which is harder to detect than older scam emails because the writing sounds more natural and polished. Instead of broken grammar, you may now get a message that looks professional, urgent, and highly personalized.

Why people fall for them

Most people do not fall for scams because they are careless. They fall for scams because the scam is designed to trigger emotion before logic. Fear, urgency, embarrassment, curiosity, and authority are still the strongest tools scammers use. AI simply helps them deliver those tricks in a more convincing way.

For example, imagine getting a call that sounds exactly like your child saying they lost their phone, got into trouble, or need money right away. In that moment, most people react emotionally first. That is why voice cloning is so effective.

The same thing happens with fake customer service, fake alerts that claim to come from Google, or urgent account warnings. If the message feels official and arrives when you are distracted, tired, or in a rush, the scam has a better chance of succeeding.

10 signs it may be an AI scam

Here are the most useful warning signs to watch for:

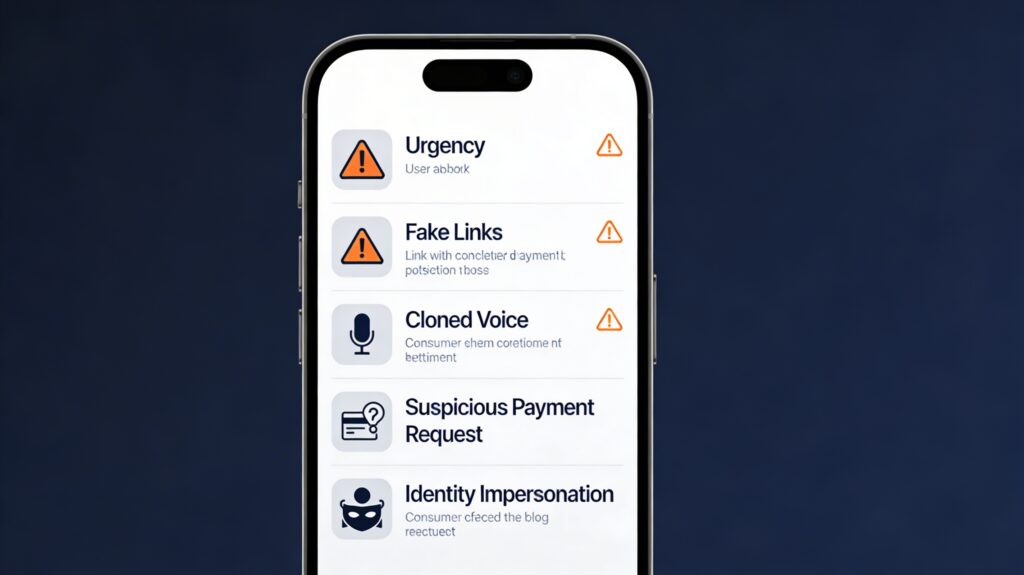

- The caller or message creates extreme urgency, such as “do this now” or “something bad will happen today.”

- Someone asks for gift cards, crypto, wire transfers, or unusual payment methods.

- A voice call sounds familiar, but the person refuses a normal verification question.

- A video or voice message feels slightly unnatural, overly smooth, delayed, or emotionally flat.

- An email or text looks polished but pushes you to click a link or share login details fast.

- A supposed support number appears in search results or AI summaries, but it is not confirmed on the company’s official website.

- The message asks you to keep the issue secret from friends, relatives, or coworkers.

- The sender uses authority, such as pretending to be a bank, government office, or executive.

- The account, caller ID, or profile looks real but has tiny inconsistencies in names, handles, or URLs.

- You feel rushed, scared, or pressured before you have time to think. That emotional pressure is often the biggest red flag of all.
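The "tiny inconsistencies" sign can be partly checked by a simple script. The sketch below is only an illustration, not a real detection tool: it flags a name that is almost, but not exactly, the same as one you have already confirmed. The names in `KNOWN_NAMES`, the function `looks_like_impersonation`, and the 0.85 threshold are all assumptions made for this example.

```python
from difflib import SequenceMatcher

# Hypothetical handles or domains that you have confirmed yourself.
KNOWN_NAMES = ["examplebank.com", "examplebank_support"]


def looks_like_impersonation(candidate: str, threshold: float = 0.85) -> bool:
    """Flag names that are very close to a known name without matching it exactly."""
    candidate = candidate.strip().lower()
    if candidate in KNOWN_NAMES:
        return False  # exact match with a name you already verified
    # A high similarity score without an exact match suggests a lookalike.
    return any(
        SequenceMatcher(None, candidate, known).ratio() >= threshold
        for known in KNOWN_NAMES
    )


for name in ["examplebank.com", "examp1ebank.com", "examplebank_supp0rt"]:
    print(name, looks_like_impersonation(name))
```

A check like this catches swapped characters such as `1` for `l` or `0` for `o`, but it will miss many other tricks, so it supports the habit of verifying rather than replacing it.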

How to verify before you trust

The best defense against AI scams is a simple rule: never trust, always verify. If you receive a strange call, voice note, or urgent message, pause before responding.

If it sounds like a family member or friend, hang up and contact them using a number you already know, not the number that just called you. The FTC advises consumers to use a second method of contact and to consider creating a family password or verification phrase that a scammer would not know.

If the message claims to be from your bank, Google, a courier, or a customer support team, do not click the link in the message. Open the official website yourself, or use the app you already trust. This simple habit blocks a huge number of phishing scams.
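For readers who are curious why links are risky, here is a minimal sketch of the check that "open the official website yourself" replaces. It assumes a hypothetical list of domains you have confirmed on your own (`TRUSTED_DOMAINS`) and a made-up helper name (`is_trusted_link`). It accepts a link only if its host is one of those domains or a subdomain of one.

```python
from urllib.parse import urlsplit

# Hypothetical allow-list: domains you have confirmed yourself.
TRUSTED_DOMAINS = {"examplebank.com", "example-courier.com"}


def is_trusted_link(url: str) -> bool:
    """Return True only if the link's host is a trusted domain or a subdomain of one."""
    host = (urlsplit(url).hostname or "").lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in TRUSTED_DOMAINS)


# The real domain, then two common tricks: a trusted name used as a
# prefix of another domain, and a swapped character.
print(is_trusted_link("https://login.examplebank.com/reset"))
print(is_trusted_link("https://examplebank.com.security-check.net/reset"))
print(is_trusted_link("https://examp1ebank.com/reset"))
```

Only the first link passes. The second one starts with a familiar name but actually belongs to a different domain, which is exactly the kind of detail that is easy to miss when you are rushed.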

How to spot deepfake audio and video

Deepfakes are getting better, but many still leave clues. In video, look for lip movements that do not perfectly match the words, strange blinking patterns, awkward facial edges, or lighting that changes unnaturally across the face. In audio, listen for tone that feels too flat, too smooth, or oddly paced, especially in emotional situations where a real person would sound more natural.

Still, the safest approach is not to become a human lie detector but to rely on verification habits. Even if a video looks real, you should confirm surprising claims through trusted news outlets, official accounts, or direct contact with the person involved.

This matters because the next generation of scams may be less about obviously fake content and more about believable content delivered at the perfect stressful moment.

Practical habits that keep you safe

If you want to reduce your risk quickly, start with these habits:

- Set a family safe word for emergencies.

- Never send money because of a voice note or urgent call alone.

- Enable two-factor authentication on your key accounts.

- Use a password manager and unique passwords for email and banking.

- Avoid calling customer service numbers found in random posts, ads, or unverified search results.

- Double-check any surprising claim before sharing it on social media.

- Talk to older family members about voice cloning and impersonation scams, because trusted-voice fraud often targets emotion and confusion.

These habits are simple, but they are powerful because they interrupt the scammer’s biggest advantage: your immediate reaction.

What to do if you think you were targeted

If you think you interacted with an AI scam, act fast. Change affected passwords immediately, especially your email password, because email access often leads to other account takeovers.

If you shared banking or card details, contact your bank or card issuer right away. If you already sent money, report it as quickly as possible, because recovery chances are usually better when you act early.

You should also report the scam to your local consumer protection or fraud reporting agency. Reporting helps platforms and authorities track patterns, warn others, and sometimes remove fake accounts or infrastructure faster.

The real rule for 2026

The biggest online safety skill in 2026 is not technical knowledge. It is learning to pause. AI scams work because they make fake messages feel immediate, personal, and believable. The more convincing the content becomes, the more valuable your verification habits become.

So if a call, video, or message pushes you to act right now, take that as your signal to slow down. Verify the source, use a second channel, and trust your process more than your panic. That one habit can save you money, protect your accounts, and stop a convincing fake before it fools you.